Fair decision-making in justice with AI

The Amazon recruiting tool made it painfully clear that the algorithms in AI systems tend to acquire human prejudices. Whenever such tools are used in the criminal justice system, the consequences could therefore be very serious, because they put the principle of equality at risk. TNO is researching what is needed to create AI that is fair and transparent.

Human intervention

In the Netherlands, we unfortunately know only too well how things can go wrong. For example, blind adherence to the strict rules of the Tax and Customs Administration resulted in thousands of parents spending many years facing allegations of fraud in relation to childcare allowance. As it turned out, meaningful human intervention in this case was not actually very meaningful.

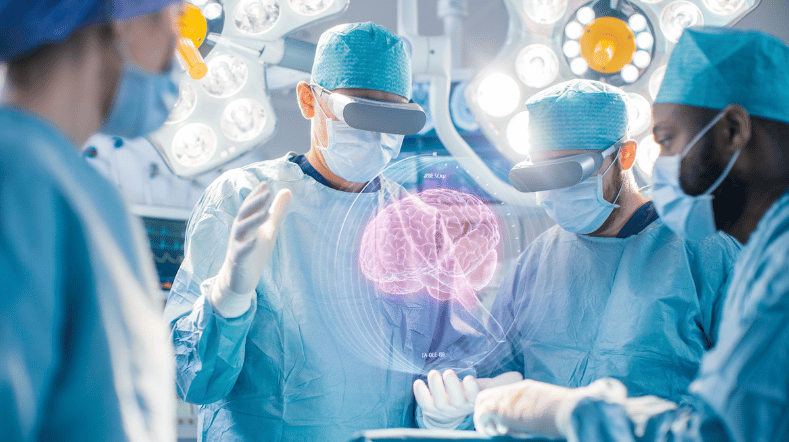

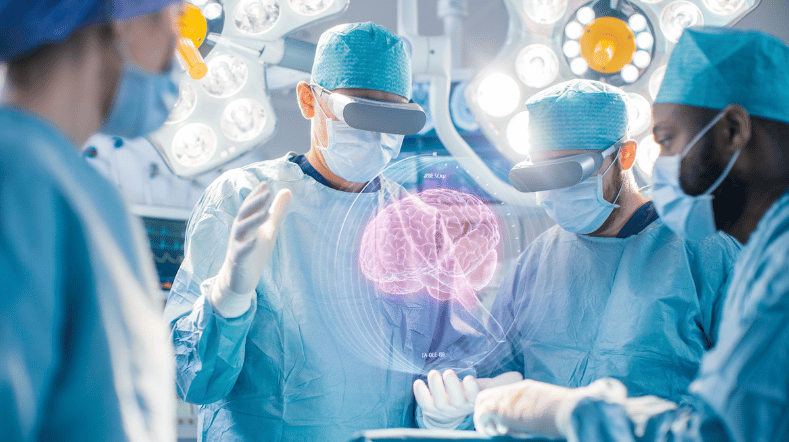

Artificial intelligence and algorithms really can add value to the work of detecting, detaining, and convicting criminals. However, you have to know exactly what these algorithms do, because AI systems based on machine learning do not work with objective data alone. There is therefore a danger that algorithms imitate human prejudices and consequently reach conclusions that are blatantly discriminatory, as the Amazon recruiting tool showed. A meaningful human role between algorithm and conclusion could resolve this problem.

Ethical AI that can be easily verified

The big question is: what can we learn from Amazon and the allowance affair? And what still needs to happen before we can use AI systems safely in the criminal justice system? After all, that development is almost sure to come.

Because of spending cuts in the criminal justice system, the pressure on judges, prosecutors, and prisoner assessors is rising sharply. Artificial intelligence can help to reduce this pressure somewhat. For that to happen, though, we need AI that is fair, ethical, and transparent. It should be free from bias and actively bring about meaningful human intervention. In short: humane AI.

AI systems under the microscope

Together with the Public Prosecution Service, the Custodial Institutions Agency, and the Central Judicial Collection Agency, TNO has analysed various AI systems in recent years.

These include COMPAS, an advanced computer program that American courts use to assess the likelihood of a suspect reoffending. The analyses revealed different forms of unfairness, and notably these sometimes led to contradictory conclusions. Fairness cannot simply be captured in one formula.
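To illustrate why a single formula falls short, here is a minimal Python sketch that applies three common fairness measures to the same set of risk predictions. The numbers are invented toy data, not COMPAS output and not results from the TNO analyses, and names such as `Case` and `make_cases` are hypothetical helpers chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    reoffended: bool  # what actually happened
    flagged: bool     # whether the system predicted "high risk"


def make_cases(tp: int, fp: int, fn: int, tn: int) -> list[Case]:
    """Build a toy group from counts of true/false positives/negatives."""
    return (
        [Case(True, True)] * tp
        + [Case(False, True)] * fp
        + [Case(True, False)] * fn
        + [Case(False, False)] * tn
    )


def selection_rate(cases: list[Case]) -> float:
    """Share of the group that is flagged as high risk."""
    return sum(c.flagged for c in cases) / len(cases)


def false_positive_rate(cases: list[Case]) -> float:
    """Share of non-reoffenders who were nevertheless flagged."""
    negatives = [c for c in cases if not c.reoffended]
    return sum(c.flagged for c in negatives) / len(negatives)


def precision(cases: list[Case]) -> float:
    """Share of flagged people who actually reoffended."""
    flagged = [c for c in cases if c.flagged]
    return sum(c.reoffended for c in flagged) / len(flagged)


# Invented toy data: two groups of ten people with different base rates.
group_a = make_cases(tp=4, fp=1, fn=1, tn=4)
group_b = make_cases(tp=2, fp=3, fn=0, tn=5)

for name, group in (("A", group_a), ("B", group_b)):
    print(
        f"group {name}: "
        f"selection rate={selection_rate(group):.3f}, "
        f"false positive rate={false_positive_rate(group):.3f}, "
        f"precision={precision(group):.3f}"
    )
```

In this toy case both groups are flagged at the same rate, so the predictions look fair by that measure. Yet a non-reoffender in group B is flagged almost twice as often as one in group A, and a flag is right far less often for group B. Which of these measures should count is a choice that the code cannot make.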

Three challenges

How can we teach AI systems to recognise prejudices and to rectify them accurately and fairly? This is the first challenge that TNO and its project partners are currently addressing. The second is to make the working method used by AI as transparent as possible. The final, and perhaps most important, challenge is to make sure that users do not start to rely too heavily on AI systems.
