Towards Digital Life: A vision of AI in 2032

In 2022, TNO will celebrate its 90th anniversary. To mark this event, a number of experts from within our organisation have written about their future vision of AI. In this vision, these experts set out where they expect us to be regarding AI in ten years’ time. And they predict what the implications will be for industry, mobility, sustainability, our health, and research itself, amongst other things.

In 1945, when TNO was only thirteen years old, American thinker Vannevar Bush inspired the world with his visionary essay, As We May Think. Bush foresaw the rapid development of computers, the Internet, and even links between the brain and machines. This digitisation revolution has since become a reality, one that has unfolded almost entirely within the 90 years since TNO was founded. It is a revolution to which TNO has also contributed with a long series of inventions.

The next wave of innovation has already presented itself in the shape of artificial intelligence (AI). We have developed algorithms that could potentially participate in our society as intelligent machines. Machines that will support humans, but that will also break free from the workplace and move through our world autonomously. Robots that will transport us, take care of us, defend us, entertain us, or be our companions.

This is an artificial form of intelligence that we, as humans, will have to relate to. As with many technological developments, the rise of AI arouses many emotions. On the one hand, we long to find out how AI can improve our lives. On the other, we fear that AI will disrupt our society and democracy. TNO predicts that AI will become ‘big’ in the next ten years: big in the sense of societal and economic impact, but also in the sense of ‘maturity’ and its capacity to display responsible and moral behaviour.

Educating AI

Our society faces the unique challenge of making it clear to AI systems which goals we, as humans, want to pursue and which ethical values should underpin the choices made by AI.

Innovation with AI

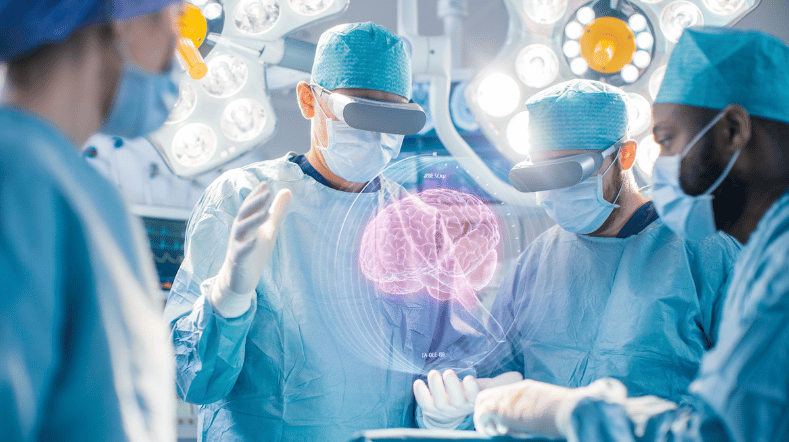

AI-driven innovation for business will lead to a 10% increase in the current European GNP by 2030. What does that world look like in concrete terms, in construction, healthcare and other sectors?

Innovating with innovation: how does AI advance science?

AI is changing the role of the researcher. The knowledge generated by AI will not be ‘explanatory’ in the coming decades. AI does make connections, but it does not establish cause and effect. Creativity remains reserved for humans for the time being.

Download the vision paper

‘Towards Digital Life: A vision of AI in 2032’

Get inspired

Working on reliable AI

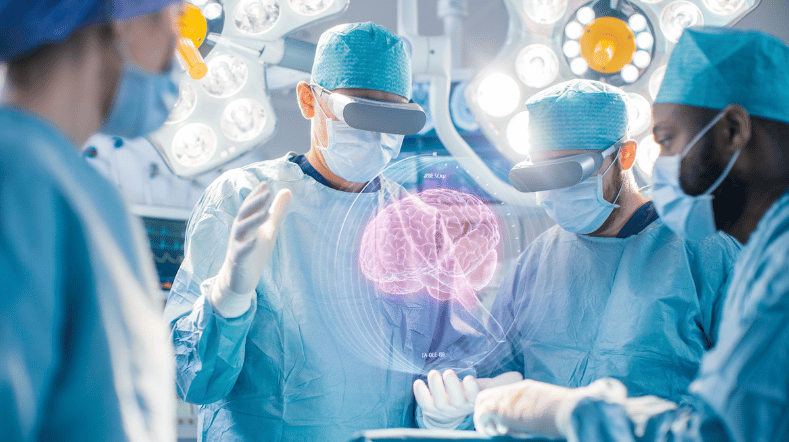

AI model for personalised healthy lifestyle advice

AI in training: FATE develops digital doctor's assistant

Boost for TNO facilities for sustainable mobility, bio-based construction and AI

GPT-NL boosts Dutch AI autonomy, knowledge, and technology